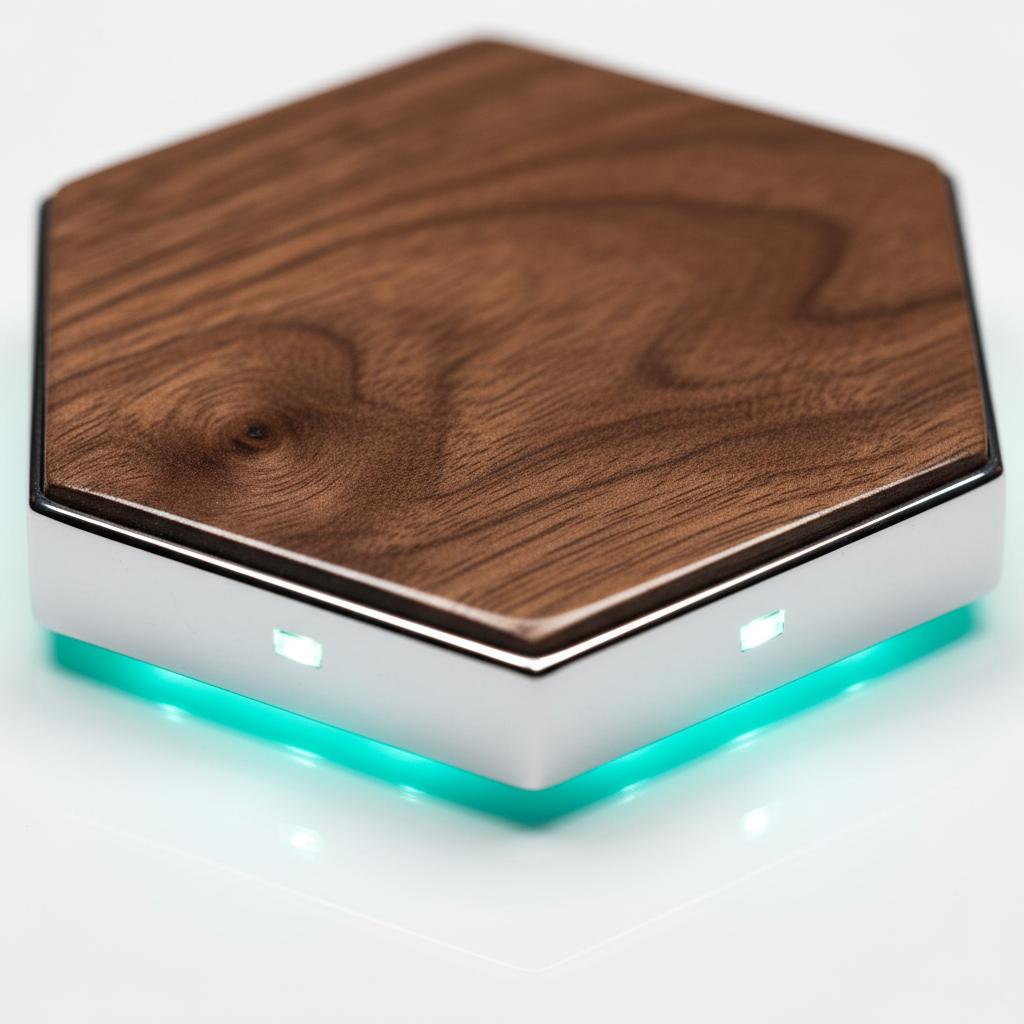

OFFLINE AI. UNCOMPROMISED.

The Micro Vault is a plug-and-play on-device intelligence workstation that runs entirely on your desk. No cloud. No subscriptions. No compromises.

Every query you send to the cloud is a dependency. On uptime. On pricing. On someone else's privacy policy. The VAULT eliminates all three.

"The moment your intelligence lives on your hardware, it answers to you, and only you."

Cloud AI is borrowed intelligence: rate-limited, retention-tracked, and one outage away from silence. Private intelligence on your desk is yours: always on, always private, answering at full speed whether or not the internet exists.

No driver installs. No stack configuration. No weekend lost to debugging.

Plug into power and ethernet. The VAULT auto-detects your GPU, verifies pre-loaded models, and starts all services automatically.

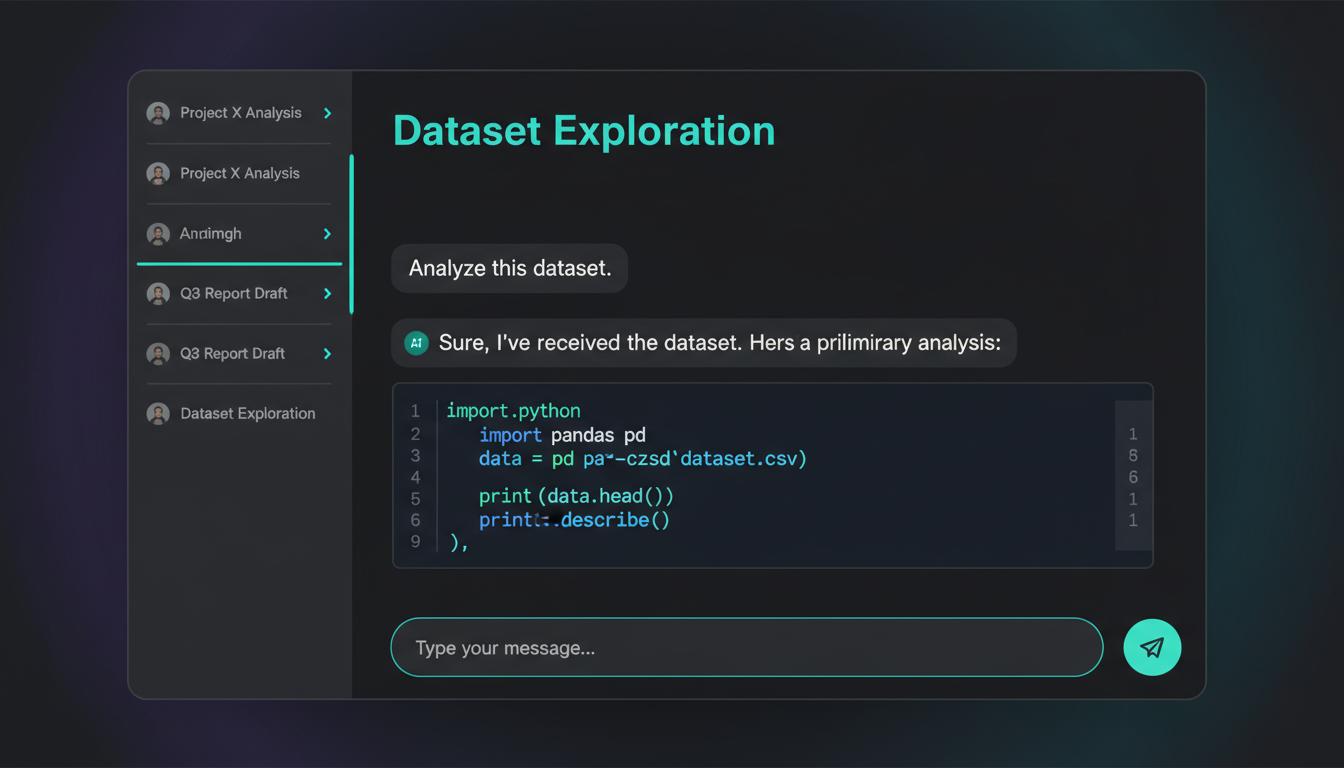

Navigate to localhost:3000. A guided welcome wizard appears — choose your use case, connect optional services.

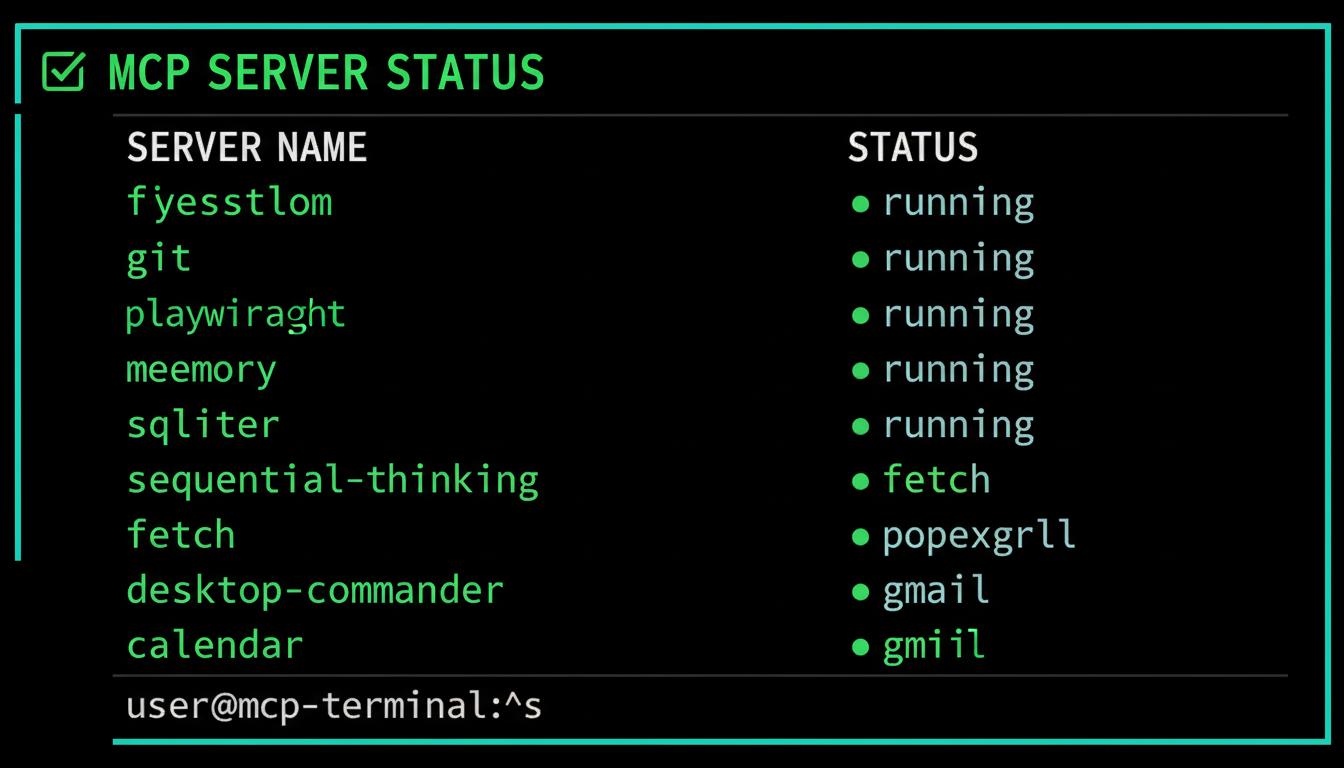

Full local inference. Persistent memory. 12 MCP servers active. All models preloaded. Zero configuration required.

DIY local inference takes 4–8 hours for a skilled developer. VAULT ships pre-configured, pre-tested, and ready on first boot.

Filesystem, Git, browser automation, database, memory, sequential thinking — all pre-wired, tested, and verified before your machine ships.

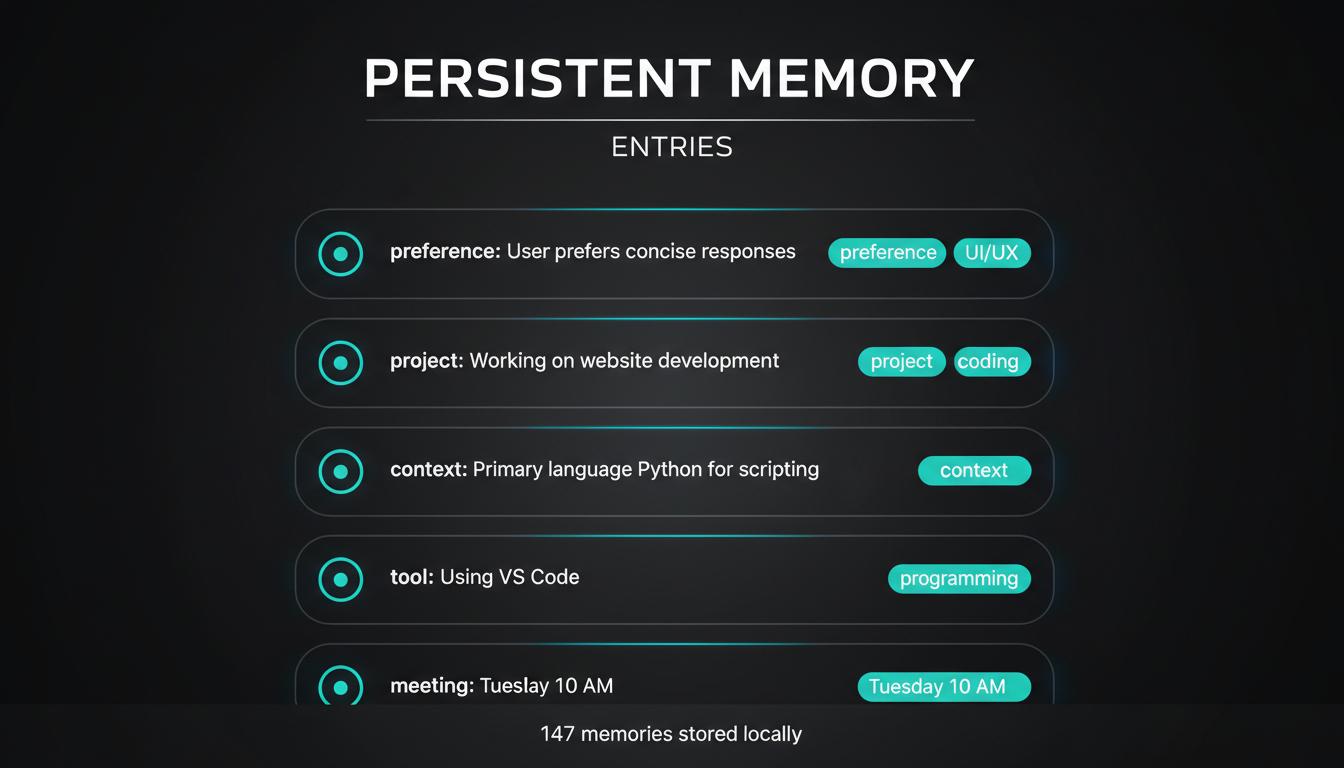

Switch between models freely. Your context follows you. No re-explaining. No 24-hour sync delays. Memory that's truly yours — stored locally, never shared.

Watch what 3 lbs of precision hardware delivers.

It arrives. Silent. Ready.

Pre-installed. Pre-tested. Pre-yours.

One desk. Full speed. No cloud.

Hardware that outpaces the competition. Software that just works. Memory that never forgets. All three running on your desk, offline, under your control.

Micro Vault — 18L Mini-ITX. RTX 3090 or RTX 5070 Ti.

Full desktop GPU. Fits on your desk.

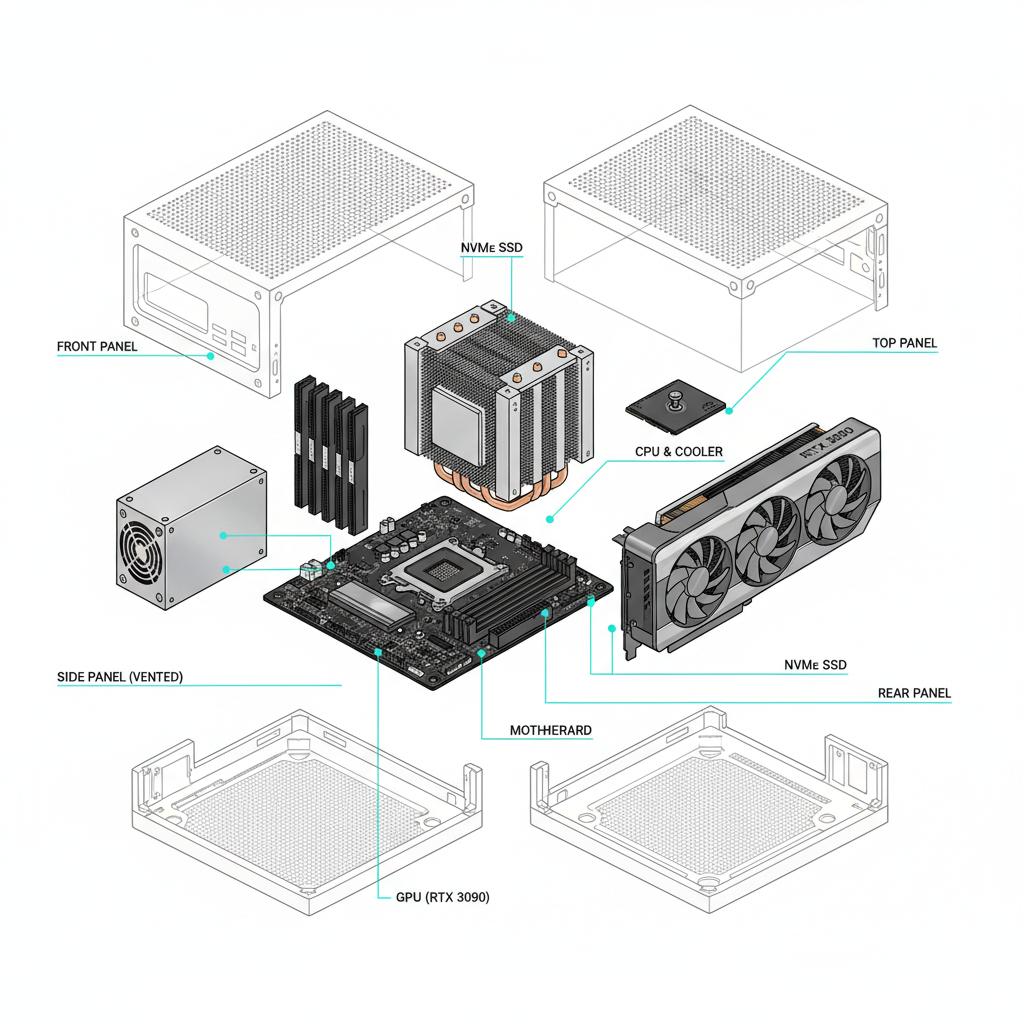

Dedicated NVIDIA GPU with up to 936 GB/s of memory bandwidth, about 3.4× the 273 GB/s of the Mac Mini M4 Pro's unified memory. Full PCIe x16 slot. Standard AM5 socket. Every component upgradeable with a screwdriver.

Open WebUI. Ollama. n8n automation. Playwright browser agents. Goose dev agents. 12 pre-configured MCP servers. Every component tested and verified before your machine ships.

Mem0 OpenMemory stores your context locally — surviving every session, every model switch, every reboot. Switch from Qwen to Llama to DeepSeek. Your memory follows you everywhere.

A prompt, a document, a codebase, a voice command. Raw input from your environment — nothing preprocessed or filtered by a third-party server.

Your RTX GPU runs inference entirely on-device. No packets leave your network. No API key required. No rate limits. Full VRAM dedicated to your request.

A response generated by local models running at 90–110 tok/s. Stored to persistent memory. Available the next time you open the app — no context window to re-fill.
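If you want to check the throughput figure on your own unit, the sketch below is one way to do it. It assumes the bundled Ollama service listens on its upstream default port, 11434 (this page does not state the port), and that a model tagged `qwen3:8b` is installed; both are assumptions rather than confirmed details of the VAULT image. It relies on Ollama's `/api/generate` endpoint and the `eval_count` and `eval_duration` fields that endpoint returns.

```python
import json
import urllib.request

# Assumption: Ollama's upstream default endpoint; the VAULT image may differ.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model: str, prompt: str) -> bytes:
    """Encode a non-streaming generate request as JSON bytes."""
    body = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(body).encode("utf-8")


def tokens_per_second(result: dict) -> float:
    """Compute generation speed from Ollama's timing fields.

    eval_count is the number of generated tokens and eval_duration is
    the time spent generating them, in nanoseconds.
    """
    return result["eval_count"] / (result["eval_duration"] / 1e9)


def generate(model: str, prompt: str, url: str = OLLAMA_URL) -> dict:
    """Send one prompt to the local Ollama server and return the parsed reply."""
    request = urllib.request.Request(
        url,
        data=build_payload(model, prompt),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read().decode("utf-8"))


def main() -> None:
    # "qwen3:8b" is an assumed model tag; substitute one that is installed.
    reply = generate("qwen3:8b", "Explain memory bandwidth in one sentence.")
    print(reply["response"])
    print(f"{tokens_per_second(reply):.1f} tok/s")
```

Calling `main()` on the machine prints the model's answer followed by the measured generation speed. Results will vary with the model, quantization, and prompt length, so treat a single run as a rough reading rather than a benchmark.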

Every stat below is documented and reproducible. We compared directly against the Mac Mini M4 Pro — the machine most developers currently use for local inference.

These aren't hypothetical problems. They're documented failures reported thousands of times across Reddit, GitHub Issues, and developer forums. The Micro Vault was designed to solve every one.

~12% of MCP installations fail due to missing configuration. Different installation methods require incompatible configuration formats, and the difference is undocumented. You spend hours debugging what should take minutes.

12 MCP servers ship pre-configured and tested. Every server verified by hand before your machine leaves our facility. Filesystem, Git, browser automation, database, memory — all active on first boot.

Auto-compaction silently drops critical instructions. The /resume command recovers only 20–40% of original context. Users spend 5+ hours per week re-explaining the same context to cloud tools.

Mem0 persistent memory survives every session, every model switch, every reboot. Switch between Qwen, Llama, and DeepSeek freely. Your context follows. No re-explaining. No 24-hour sync delays. Your memory — stored locally, never shared.

Cloud AI providers have retained consumer data for up to 5 years. 233+ outages tracked since October 2025. You have no fallback when the internet is slow, restricted, or controlled by someone else's policy.

No data leaves your machine. Ever. No cloud dependency. No retention policies. No outages. The VAULT operates fully offline and is designed to support HIPAA, GDPR, and EU AI Act compliance from the moment it boots.

Both tiers ship with the full VAULT software stack — pre-configured, pre-tested, ready on first boot.

The value champion. 3–5× faster than Mac Mini. Runs 8B–32B models in VRAM.

Future-proof architecture. Blackwell GPU. 64GB RAM for concurrent models and larger context.

All components are standard and upgradeable. Both machines use AM5, DDR5 DIMMs, PCIe x16, and M.2 NVMe. When better hardware arrives, swap it in — no proprietary parts, no soldered surprises.

The Mac Mini solders everything shut. The VAULT uses standard AM5, DDR5, PCIe x16, and M.2 — the same sockets every PC builder has used for years. Your system grows with the technology, not against it.

Your workstation evolves with the field of local intelligence. Not the other way around.

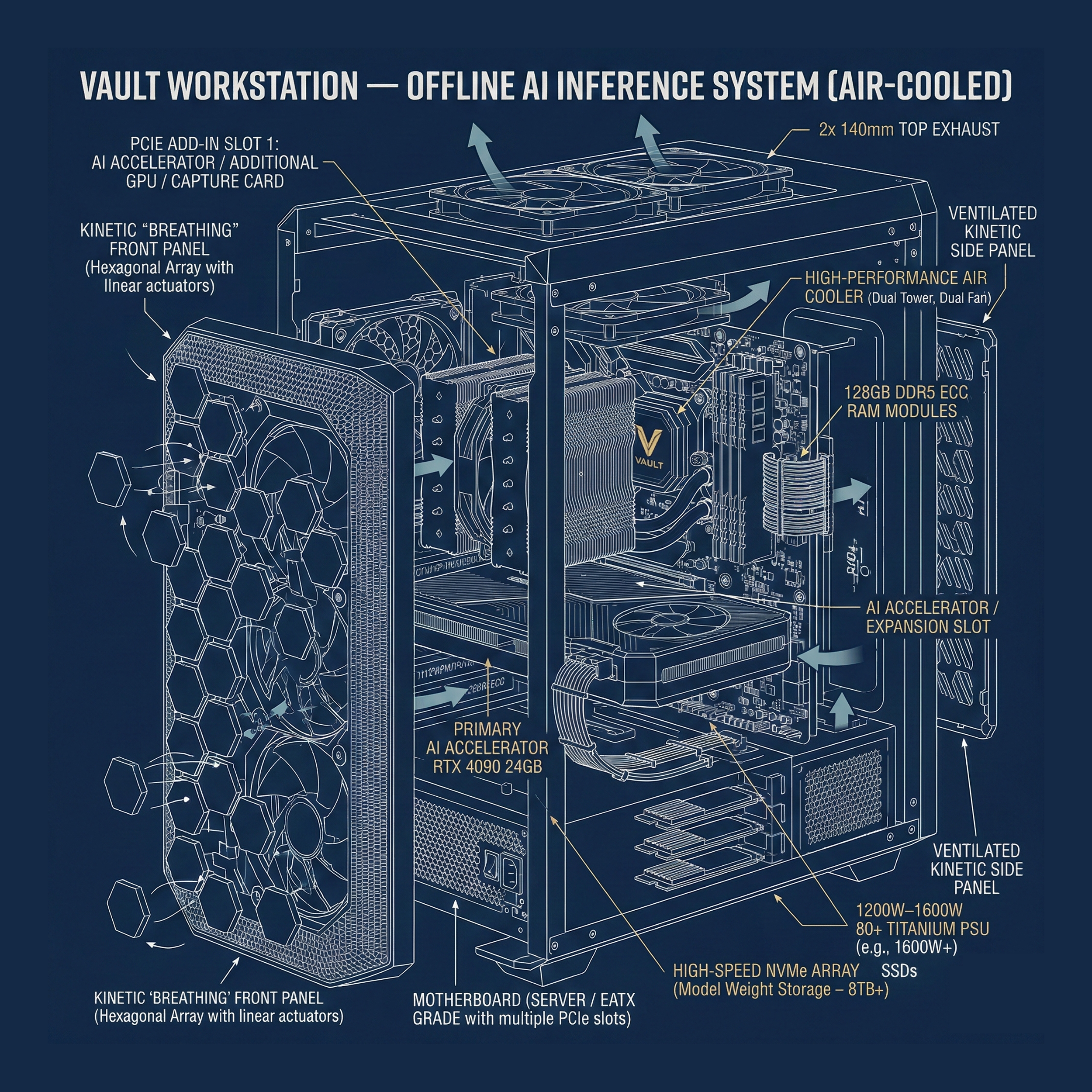

The patent-pending VAULT architecture was designed from the ground up for regulated industries, sensitive workflows, and anyone who believes their data belongs to them.

No PHI ever leaves the device. Inference runs entirely on-device with no cloud transmission path.

No data processed outside your jurisdiction. No third-party processors. No DPA required.

Hardware-enforced data containment. Full audit trail via local logs. No provider dependency for compliance.

Air-gapped operation by design. No model weights transmitted. All inference on controlled hardware.

"Patent-pending architecture with hardware-enforced one-way data link. No cloud AI service can match this level of containment."

The Micro Vault Core runs 8B models at 90–110 tok/s. A Mac Mini M4 Pro 24GB runs the same models at 20–30 tok/s. That's a 3–5× speed advantage. The RTX 3090's 936 GB/s memory bandwidth compared to the M4 Pro's 273 GB/s explains the gap. The VAULT also runs 32B models fully in VRAM — the Mac Mini 24GB cannot load them at all. The Mac Mini wins on power draw (20–40W vs. 350–400W) and noise — real tradeoffs we don't hide.
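The arithmetic behind those ratios can be reproduced in a few lines. This sketch uses only the figures quoted on this page; the idea that token generation speed roughly tracks memory bandwidth is a common rule of thumb, not a guarantee, and real results depend on the model and quantization.

```python
# Figures quoted on this page.
RTX_3090_BANDWIDTH_GBPS = 936
M4_PRO_BANDWIDTH_GBPS = 273
VAULT_TOK_S = (90, 110)     # 8B models on the Micro Vault Core
MAC_MINI_TOK_S = (20, 30)   # same models on a Mac Mini M4 Pro 24GB


def ratio_range(fast, slow):
    """Return the (smallest, largest) ratio between two (low, high) ranges."""
    return fast[0] / slow[1], fast[1] / slow[0]


bandwidth_ratio = RTX_3090_BANDWIDTH_GBPS / M4_PRO_BANDWIDTH_GBPS
low, high = ratio_range(VAULT_TOK_S, MAC_MINI_TOK_S)

print(f"bandwidth ratio: {bandwidth_ratio:.2f}x")      # bandwidth ratio: 3.43x
print(f"throughput ratio: {low:.1f}x to {high:.1f}x")  # throughput ratio: 3.0x to 5.5x
```

The bandwidth ratio of about 3.4× falls inside the 3.0× to 5.5× throughput range, which is consistent with bandwidth accounting for most of the gap.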

Yes — completely. All models run on-device. All software runs locally. Once your machine is set up, it operates indefinitely offline. The only time internet is useful is for pulling new models from the Ollama library or receiving software updates, both of which are optional and user-initiated.

The Core tier (RTX 3090 24GB) ships with Qwen 3 8B for general tasks, Qwen 2.5 Coder 14B for coding, DeepSeek R1 8B Distill for reasoning, and nomic-embed-text for embeddings and RAG. The Pro tier (RTX 5070 Ti 16GB) ships with models tuned for its 16GB GDDR7 VRAM. You can pull any of 1,200+ Ollama models from the UI at any time.

Yes — that's a core design principle. The Micro Vault uses a standard AM5 CPU socket (supported through at least 2027), two DDR5 DIMM slots, a PCIe x16 GPU slot, and an M.2 NVMe port. All components are standard, screwdriver-accessible, and swappable. When the RTX 6090 ships, you swap the GPU. When 128GB DDR5 kits drop in price, you add RAM. No soldering, no voided warranties, no proprietary parts.

The $100 deposit secures your place in the production queue. It is fully refundable at any time before your machine ships — no questions asked. Expected shipping is Q3 2026. You will receive email updates as your build approaches.

The full VAULT software stack includes: Ollama (inference engine), Open WebUI (browser interface at localhost:3000), AnythingLLM (document workflows), Mem0 OpenMemory MCP server (persistent local memory), 12 pre-configured MCP servers (Filesystem, Git, Playwright, Docker, SQLite, PostgreSQL, Gmail/Calendar, and more), n8n workflow automation, Goose development agents, Faster-whisper for local voice input, and Piper TTS for local voice output. All services start automatically on boot via Docker Compose.
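A quick way to confirm that the stack came up after boot is to probe the local ports. The sketch below is illustrative only: port 3000 for Open WebUI comes from this page, while 11434 for Ollama is the upstream default and an assumption about the VAULT image. The `SERVICES` table and helper names are hypothetical.

```python
import socket

# Open WebUI's port is stated on this page. The Ollama port is its
# upstream default and is an assumption about the VAULT image.
SERVICES = {"Open WebUI": 3000, "Ollama": 11434}


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_services(services=SERVICES, host: str = "127.0.0.1") -> dict:
    """Map each service name to whether its port accepts connections."""
    return {name: port_open(host, port) for name, port in services.items()}


def report() -> None:
    """Print one status line per service."""
    for name, up in check_services().items():
        print(f"{name}: {'up' if up else 'not reachable'}")
```

Running `report()` on the machine prints one line per service. An open port only shows that something is listening; it does not prove the service behind it is healthy.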

The Micro Vault is designed to support HIPAA, GDPR, and EU AI Act compliance. Because all inference runs on-device, no patient data, personal data, or sensitive information is transmitted to any external server. There is no cloud component, no third-party processor, and no data retention by Vault AI. Organizations deploying in regulated environments should conduct their own compliance assessment — we can provide architecture documentation to support that review.

Reserve your Micro Vault today. $100 fully refundable deposit secures your place.

Expected shipping Q3 2026. No cloud account required. Ever.